Data transformations in Container insights

This article describes how to implement data transformations in Container insights. Transformations in Azure Monitor let you modify or filter data before it's ingested into your Log Analytics workspace. For example, you can filter out data collected from your cluster to reduce costs, or process incoming data to make it easier to query.

Important

The articles Configure log collection in Container insights and Filtering log collection in Container insights describe the standard settings for configuring and filtering data collection in Container insights. Make any required configuration changes with these features before you use transformations. Use a transformation only for filtering or other data configuration that you can't perform with the standard settings.

Data collection rule

Transformations are implemented in data collection rules (DCRs), which are used to configure data collection in Azure Monitor. Configure data collection using DCR describes the DCR that's automatically created when you enable Container insights on a cluster. To create a transformation, take one of the following actions:

- New cluster. Use an existing ARM template to onboard an AKS cluster to Container insights. Modify the DCR in that template with your required configuration, including a transformation similar to one of the samples below.

- Existing DCR. After a cluster has been onboarded to Container insights and data collection has been configured, edit its DCR to include a transformation by using any of the methods in Editing Data Collection Rules.

Note

There's currently minimal UI support for editing DCRs, and editing is required to add transformations. In most cases, you need to edit the DCR manually. This article describes the DCR structure to implement. See Create and edit data collection rules (DCRs) in Azure Monitor for guidance on how to implement that structure.

Data sources

The dataSources section of the DCR defines the types of incoming data that the DCR processes. For Container insights, this is the Container insights extension, which includes one or more predefined streams whose names start with the prefix Microsoft-.

The list of Container insights streams in the DCR depends on the cost preset that you selected for the cluster. If you collect all tables, the DCR uses the Microsoft-ContainerInsights-Group-Default stream. This is a group stream that includes all of the streams listed in Stream values. If you're going to use a transformation, you must replace it with individual streams. All other cost presets already use individual streams.

The sample below shows the Microsoft-ContainerInsights-Group-Default stream. See the Sample DCRs for samples using individual streams.

"dataSources": {
  "extensions": [
    {
      "streams": [
        "Microsoft-ContainerInsights-Group-Default"
      ],
      "name": "ContainerInsightsExtension",
      "extensionName": "ContainerInsights",
      "extensionSettings": {
        "dataCollectionSettings": {
          "interval": "1m",
          "namespaceFilteringMode": "Off",
          "namespaces": null,
          "enableContainerLogV2": true
        }
      }
    }
  ]
}
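If you edit DCRs with a script rather than by hand, you can automate the swap from the group stream to individual streams. The following Python sketch is illustrative only and rests on these assumptions:

- The DCR's dataSources and dataFlows sections have already been loaded into a dict, for example from an exported JSON file.
- Any data flow that references the group stream should also be rewritten.
- INDIVIDUAL_STREAMS is a hypothetical subset taken from this article's samples, not the full list that the group stream covers.

```python
import copy

GROUP_STREAM = "Microsoft-ContainerInsights-Group-Default"

# Hypothetical subset: the individual streams used in this article's samples.
# Replace it with the streams from Stream values that you actually need.
INDIVIDUAL_STREAMS = [
    "Microsoft-ContainerLogV2",
    "Microsoft-KubeEvents",
    "Microsoft-KubePodInventory",
]


def _expand(streams: list[str], replacement: list[str]) -> list[str]:
    """Replace the group stream with individual streams, dropping duplicates."""
    expanded: list[str] = []
    for stream in streams:
        expanded.extend(replacement if stream == GROUP_STREAM else [stream])
    return list(dict.fromkeys(expanded))  # keeps first occurrence, preserves order


def expand_group_stream(dcr: dict, replacement: list[str]) -> dict:
    """Return a copy of the DCR sections with the group stream replaced by
    individual streams. The input dict is not modified."""
    result = copy.deepcopy(dcr)
    for ext in result.get("dataSources", {}).get("extensions", []):
        if ext.get("extensionName") == "ContainerInsights":
            ext["streams"] = _expand(ext["streams"], replacement)
    for flow in result.get("dataFlows", []):
        flow["streams"] = _expand(flow["streams"], replacement)
    return result


if __name__ == "__main__":
    dcr = {
        "dataSources": {
            "extensions": [{
                "streams": [GROUP_STREAM],
                "name": "ContainerInsightsExtension",
                "extensionName": "ContainerInsights",
            }]
        },
        "dataFlows": [{"streams": [GROUP_STREAM], "destinations": ["ciworkspace"]}],
    }
    updated = expand_group_stream(dcr, INDIVIDUAL_STREAMS)
    print(updated["dataSources"]["extensions"][0]["streams"])
```

Because the function works on a copy, you can compare the result with the original before you deploy it.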

Data flows

The dataFlows section of the DCR matches streams with destinations that are defined in the destinations section of the DCR. Table names don't have to be specified for known streams if the data is being sent to the default table. The streams that don't require a transformation can be grouped together in a single entry that includes only the workspace destination. Each will be sent to its default table.

Create a separate entry for each stream that requires a transformation. It should include the workspace destination and the transformKql property. If you're sending data to an alternate table, you also need to include the outputStream property, which specifies the name of the destination table.

The sample below shows the dataFlows section for a single stream with a transformation. See Sample DCRs for examples with multiple data flows in a single DCR.

"dataFlows": [
  {
    "streams": [
      "Microsoft-ContainerLogV2"
    ],
    "destinations": [
      "ciworkspace"
    ],
    "transformKql": "source | where PodNamespace == 'kube-system'"
  }
]
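The rule described above can be expressed as a small helper:

- Streams without a transformation share one entry.
- Each transformation gets its own entry.
- outputStream is set only when the data goes to an alternate table.

This is a hypothetical Python sketch, not part of any Azure SDK. The function name, the tuple format, and the default destination name ciworkspace (taken from the samples) are assumptions.

```python
import json
from typing import Optional

# (stream, transformKql, outputStream or None to keep the default table)
Transform = tuple[str, str, Optional[str]]


def build_data_flows(streams: list[str], transforms: list[Transform],
                     destination: str = "ciworkspace") -> list[dict]:
    """Build a dataFlows list: one shared entry for streams without a
    transformation, plus one entry per transformation."""
    transformed = {stream for stream, _, _ in transforms}
    plain = [s for s in streams if s not in transformed]

    flows: list[dict] = []
    if plain:
        # No transformKql: each stream is sent to its default table.
        flows.append({"streams": plain, "destinations": [destination]})
    for stream, kql, output_stream in transforms:
        flow = {"streams": [stream], "destinations": [destination], "transformKql": kql}
        if output_stream:
            # Only needed when sending to a table other than the default one.
            flow["outputStream"] = output_stream
        flows.append(flow)
    return flows


if __name__ == "__main__":
    # Reproduces the single-stream sample above.
    flows = build_data_flows(
        ["Microsoft-ContainerLogV2"],
        [("Microsoft-ContainerLogV2", "source | where PodNamespace == 'kube-system'", None)],
    )
    print(json.dumps({"dataFlows": flows}, indent=2))
```

Passing the same stream in two tuples produces two data flows for that stream. That's the pattern used in Send data to different tables.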

Sample DCRs

Filter data

The first example filters out data from the ContainerLogV2 table based on the LogLevel column. Only records with a LogLevel of error or critical are collected, since these are the entries that you might use for alerting and identifying issues in the cluster. Collecting and storing other levels, such as info and debug, generates cost without significant value.

You can retrieve these records using the following log query.

ContainerLogV2 | where LogLevel in ('error', 'critical')

This logic is shown in the following diagram.

In a transformation, the table name source represents the incoming data. The following is the modified query to use in the transformation.

source | where LogLevel in ('error', 'critical')
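To preview which records this transformation keeps, you can mimic the same predicate locally. This Python sketch is only an approximation of source | where LogLevel in ('error', 'critical'), not how Azure Monitor runs it. The sample records are made up, and the comparison is exact and case-sensitive, as it is with the KQL in operator.

```python
# Levels kept by: source | where LogLevel in ('error', 'critical')
KEEP_LEVELS = {"error", "critical"}


def apply_filter(records: list[dict]) -> list[dict]:
    """Keep records whose LogLevel exactly matches a kept level.
    Like the KQL in operator, the comparison is case-sensitive."""
    return [r for r in records if r.get("LogLevel") in KEEP_LEVELS]


# Made-up records for illustration; real ContainerLogV2 rows have more columns.
sample = [
    {"LogLevel": "info", "LogMessage": "started"},
    {"LogLevel": "error", "LogMessage": "connection refused"},
    {"LogLevel": "debug", "LogMessage": "retrying"},
    {"LogLevel": "critical", "LogMessage": "out of memory"},
    {"LogLevel": "ERROR", "LogMessage": "different casing"},
]

kept = apply_filter(sample)
print([r["LogMessage"] for r in kept])  # ['connection refused', 'out of memory']
```

The record with LogLevel ERROR is dropped because of the case-sensitive match. If your workloads log mixed-case levels, consider a case-insensitive comparison such as the in~ operator, after you confirm that it's supported in transformation KQL.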

The following sample shows this transformation added to the Container insights DCR. Microsoft-ContainerLogV2 gets its own data flow because it's the only incoming stream that the transformation should apply to. The other streams share a separate data flow with no transformation.

{
  "properties": {
    "location": "eastus2",
    "kind": "Linux",
    "dataSources": {
      "syslog": [],
      "extensions": [
        {
          "streams": [
            "Microsoft-ContainerLogV2",
            "Microsoft-KubeEvents",
            "Microsoft-KubePodInventory"
          ],
          "extensionName": "ContainerInsights",
          "extensionSettings": {
            "dataCollectionSettings": {
              "interval": "1m",
              "namespaceFilteringMode": "Off",
              "enableContainerLogV2": true
            }
          },
          "name": "ContainerInsightsExtension"
        }
      ]
    },
    "destinations": {
      "logAnalytics": [
        {
          "workspaceResourceId": "/subscriptions/00000000-0000-0000-0000-000000000000/resourcegroups/my-resource-group/providers/microsoft.operationalinsights/workspaces/my-workspace",
          "workspaceId": "00000000-0000-0000-0000-000000000000",
          "name": "ciworkspace"
        }
      ]
    },
    "dataFlows": [
      {
        "streams": [
          "Microsoft-KubeEvents",
          "Microsoft-KubePodInventory"
        ],
        "destinations": [
          "ciworkspace"
        ]
      },
      {
        "streams": [
          "Microsoft-ContainerLogV2"
        ],
        "destinations": [
          "ciworkspace"
        ],
        "transformKql": "source | where LogLevel in ('error', 'critical')"
      }
    ]
  }
}

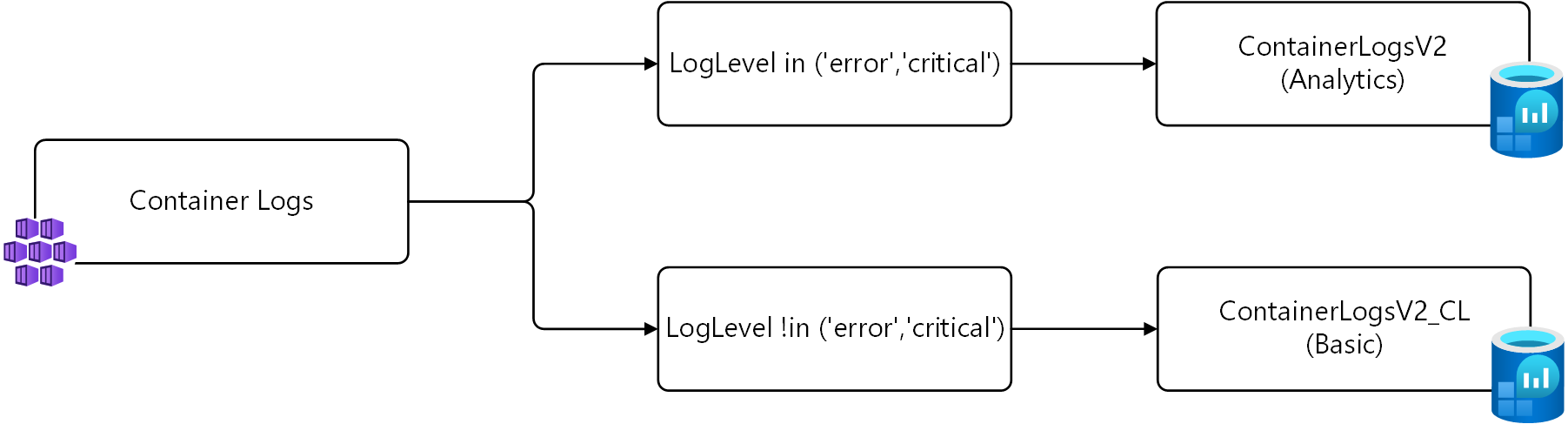
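Before you deploy an edited DCR, a quick local sanity check can catch two likely mistakes:

- A collected stream that no data flow routes.
- A data flow that names an undefined destination.

The following Python sketch is a hypothetical helper, not the validation that Azure Monitor itself performs. It uses a trimmed copy of the sample DCR above that contains only the fields it reads. Parsing with json.loads also fails fast on JSON syntax errors such as trailing commas.

```python
import json

# Trimmed copy of the sample DCR above: only the fields the checks read.
DCR_JSON = """
{
  "properties": {
    "dataSources": {
      "extensions": [
        {
          "streams": ["Microsoft-ContainerLogV2", "Microsoft-KubeEvents",
                      "Microsoft-KubePodInventory"],
          "extensionName": "ContainerInsights",
          "name": "ContainerInsightsExtension"
        }
      ]
    },
    "destinations": {"logAnalytics": [{"name": "ciworkspace"}]},
    "dataFlows": [
      {"streams": ["Microsoft-KubeEvents", "Microsoft-KubePodInventory"],
       "destinations": ["ciworkspace"]},
      {"streams": ["Microsoft-ContainerLogV2"],
       "destinations": ["ciworkspace"],
       "transformKql": "source | where LogLevel in ('error', 'critical')"}
    ]
  }
}
"""


def check_dcr(dcr: dict) -> list[str]:
    """Return problems found: collected streams that no data flow routes,
    and data flows that name a destination that isn't defined."""
    props = dcr["properties"]
    collected = {
        stream
        for ext in props["dataSources"].get("extensions", [])
        for stream in ext["streams"]
    }
    defined = {d["name"] for dests in props["destinations"].values() for d in dests}

    problems: list[str] = []
    routed: set[str] = set()
    for i, flow in enumerate(props["dataFlows"]):
        routed.update(flow["streams"])
        for dest in flow["destinations"]:
            if dest not in defined:
                problems.append(f"dataFlows[{i}] uses undefined destination {dest!r}")
    for stream in sorted(collected - routed):
        problems.append(f"stream {stream!r} is collected but not routed by any data flow")
    return problems


# json.loads raises json.JSONDecodeError on syntax errors such as trailing commas.
dcr = json.loads(DCR_JSON)
print(check_dcr(dcr) or "no problems found")
```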

Send data to different tables

In the example above, only records with a LogLevel of error or critical are collected. Instead of discarding the other records entirely, an alternate strategy is to save them to a separate table configured for basic logs.

This strategy needs two transformations. The first sends records with a LogLevel of error or critical to the default table. The second sends all other records to a custom table named ContainerLogV2_CL. The query for each is shown below. Each uses source for the incoming data, as described in the previous example.

// Return error and critical logs
source | where LogLevel in ('error', 'critical')

// Return logs that aren't error or critical
source | where LogLevel !in ('error', 'critical')
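The second query is the exact complement of the first, so each incoming record goes to exactly one of the two tables. The following Python sketch mimics both predicates on made-up records to illustrate that split. It approximates the KQL and isn't how the transformation engine runs. It assumes that every record has a string LogLevel, which may be empty.

```python
ERROR_LEVELS = ("error", "critical")


def to_default_table(records: list[dict]) -> list[dict]:
    # source | where LogLevel in ('error', 'critical')
    return [r for r in records if r["LogLevel"] in ERROR_LEVELS]


def to_custom_table(records: list[dict]) -> list[dict]:
    # source | where LogLevel !in ('error', 'critical')
    return [r for r in records if r["LogLevel"] not in ERROR_LEVELS]


# Made-up sample; assumes every record has a string LogLevel (possibly empty).
records = [{"LogLevel": lvl} for lvl in ["info", "error", "debug", "critical", "warning", ""]]
default_rows = to_default_table(records)
custom_rows = to_custom_table(records)

# The predicates are complements, so every record lands in exactly one table.
print(len(default_rows) + len(custom_rows) == len(records))  # True
print([r["LogLevel"] for r in custom_rows])  # ['info', 'debug', 'warning', '']
```

Records with an empty or unexpected LogLevel end up in ContainerLogV2_CL rather than being lost.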

This logic is shown in the following diagram.

Important

Before you install the DCR in this sample, you must create a new table with the same schema as ContainerLogV2. Name it ContainerLogV2_CL and configure it for basic logs.

The following sample shows these transformations added to the Container insights DCR. There are two data flows for Microsoft-ContainerLogV2 in this DCR, one for each transformation. The first sends data to the default table, so you don't need to specify a table name. The second requires the outputStream property to specify the destination table.

{
  "properties": {
    "location": "eastus2",
    "kind": "Linux",
    "dataSources": {
      "syslog": [],
      "extensions": [
        {
          "streams": [
            "Microsoft-ContainerLogV2",
            "Microsoft-KubeEvents",
            "Microsoft-KubePodInventory"
          ],
          "extensionName": "ContainerInsights",
          "extensionSettings": {
            "dataCollectionSettings": {
              "interval": "1m",
              "namespaceFilteringMode": "Off",
              "enableContainerLogV2": true
            }
          },
          "name": "ContainerInsightsExtension"
        }
      ]
    },
    "destinations": {
      "logAnalytics": [
        {
          "workspaceResourceId": "/subscriptions/00000000-0000-0000-0000-000000000000/resourcegroups/my-resource-group/providers/microsoft.operationalinsights/workspaces/my-workspace",
          "workspaceId": "00000000-0000-0000-0000-000000000000",
          "name": "ciworkspace"
        }
      ]
    },
    "dataFlows": [
      {
        "streams": [
          "Microsoft-KubeEvents",
          "Microsoft-KubePodInventory"
        ],
        "destinations": [
          "ciworkspace"
        ]
      },
      {
        "streams": [
          "Microsoft-ContainerLogV2"
        ],
        "destinations": [
          "ciworkspace"
        ],
        "transformKql": "source | where LogLevel in ('error', 'critical')"
      },
      {
        "streams": [
          "Microsoft-ContainerLogV2"
        ],
        "destinations": [
          "ciworkspace"
        ],
        "transformKql": "source | where LogLevel !in ('error', 'critical')",
        "outputStream": "Custom-ContainerLogV2_CL"
      }
    ]
  }
}
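When a DCR has two data flows for the same stream, it can help to list where each stream ends up before you deploy it. The following Python sketch is a hypothetical helper, not an Azure tool. It reads a trimmed copy of the dataFlows section above. It assumes that, when outputStream is absent, a Microsoft- stream is written to the table of the same name without the prefix, which holds for the streams used here.

```python
import json

# Trimmed copy of the dataFlows section from the sample DCR above.
DATA_FLOWS = json.loads("""
[
  {"streams": ["Microsoft-KubeEvents", "Microsoft-KubePodInventory"],
   "destinations": ["ciworkspace"]},
  {"streams": ["Microsoft-ContainerLogV2"],
   "destinations": ["ciworkspace"],
   "transformKql": "source | where LogLevel in ('error', 'critical')"},
  {"streams": ["Microsoft-ContainerLogV2"],
   "destinations": ["ciworkspace"],
   "transformKql": "source | where LogLevel !in ('error', 'critical')",
   "outputStream": "Custom-ContainerLogV2_CL"}
]
""")


def target_table(stream: str, flow: dict) -> str:
    """Guess the table that a data flow writes a stream to.

    Assumption: without outputStream, the default table is the stream name
    minus its Microsoft- prefix (true for the streams in this sample).
    """
    target = flow.get("outputStream", stream)
    for prefix in ("Custom-", "Microsoft-"):
        if target.startswith(prefix):
            return target[len(prefix):]
    return target


def routing_summary(flows: list[dict]) -> list[tuple[str, str, str]]:
    """List (stream, table, transformation) for every stream in every flow."""
    rows = []
    for flow in flows:
        kql = flow.get("transformKql", "(none)")
        for stream in flow["streams"]:
            rows.append((stream, target_table(stream, flow), kql))
    return rows


for stream, table, kql in routing_summary(DATA_FLOWS):
    print(f"{stream:28} -> {table:18} {kql}")
```

The summary shows Microsoft-ContainerLogV2 twice, once routed to ContainerLogV2 and once to ContainerLogV2_CL, matching the two transformations.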

Next steps

- Read more about transformations and data collection rules in Azure Monitor.