Hello @Samrat,

To investigate this issue further, could you please provide more information about the scenario:

- How exactly are you submitting the Spark jobs to Azure HDInsight?

- Are you following any article? If so, please provide the link to it; otherwise, please share the exact steps you are following.

- When you launch the YARN UI from the Ambari UI, are you able to see the application ID associated with the Spark job you submitted?

Meanwhile, you can check out the article "Debug Apache Spark jobs running on Azure HDInsight".

> Can we fetch the Spark YARN log from Azure HDInsight programmatically? Do we have any REST call or custom tool in Azure to fetch the YARN log?

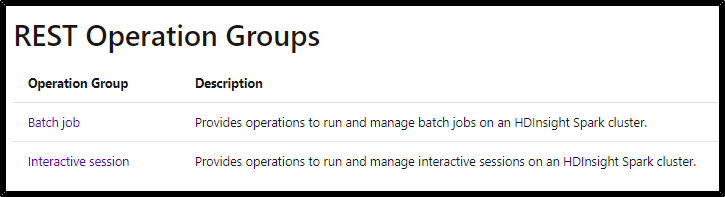

Use these APIs to submit a remote job to HDInsight Spark clusters. All task operations conform to the HTTP/1.1 protocol. Make sure you are authenticating with the Spark cluster management endpoint using HTTP basic authentication with your Spark administrator credentials.

Reference: Azure HDInsight Spark - Remote Job Submission REST API
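The job submission request described above can be sketched in Python with only the standard library. This is a minimal sketch, assuming the cluster's Livy endpoint is reachable at `https://<your_hdi_url>/livy/batches` and that the application JAR or script is already stored where the cluster can read it. The function name `build_batch_submit_request` is hypothetical, and the `X-Requested-By` header is an assumption based on Livy's CSRF-protection convention (the Azure curl examples include it), not something stated in this answer.

```python
import base64
import json
import urllib.request


def build_batch_submit_request(cluster_url, user, password, file_path, class_name=None):
    """Build (but do not send) a POST /livy/batches request.

    cluster_url: placeholder such as "https://<your_hdi_url>"
    file_path:   location of the application JAR/.py file readable by the cluster
    """
    payload = {"file": file_path}
    if class_name is not None:
        # Main class of a JAR application; omitted for script-based jobs.
        payload["className"] = class_name

    # HTTP basic authentication with the cluster administrator credentials.
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")

    return urllib.request.Request(
        url=f"{cluster_url.rstrip('/')}/livy/batches",
        data=json.dumps(payload).encode("utf-8"),
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {token}",
            # Assumed: Livy's CSRF protection expects this header on POST requests.
            "X-Requested-By": user,
        },
    )
```

Sending the request with `urllib.request.urlopen(req)` should return a JSON description of the new batch; its `id` field is the job id used in the log URL.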

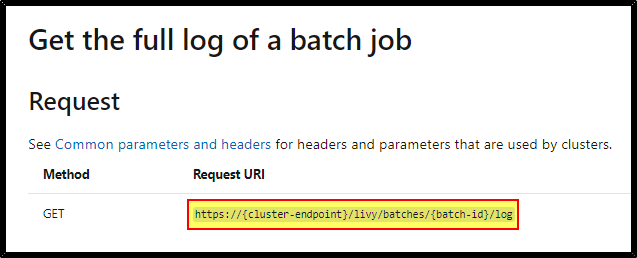

You can send a GET request to the Livy endpoint in this format: `https://<your_hdi_url>/livy/batches/<id of your job>/log`

Reference: Get the full log of a batch job.
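The GET request above can be sketched in Python with the standard library. This is a minimal sketch, assuming HTTP basic authentication with the cluster administrator credentials and Livy's optional `from` and `size` query parameters for paging through the log. The helper names `build_batch_log_request` and `extract_log_lines` are hypothetical, and the response is assumed to be JSON with the log lines in a `log` array.

```python
import base64
import json
import urllib.parse
import urllib.request


def build_batch_log_request(cluster_url, batch_id, user, password, start=0, size=100):
    """Build (but do not send) GET /livy/batches/<id>/log.

    start/size map to Livy's optional `from` and `size` query parameters.
    """
    query = urllib.parse.urlencode({"from": start, "size": size})
    # HTTP basic authentication with the cluster administrator credentials.
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url=f"{cluster_url.rstrip('/')}/livy/batches/{batch_id}/log?{query}",
        method="GET",
        headers={"Authorization": f"Basic {token}"},
    )


def extract_log_lines(response_body):
    """Return the log lines from a Livy batch-log JSON response (empty if absent)."""
    payload = json.loads(response_body)
    return payload.get("log", [])
```

To read a page of the log, pass the request to `urllib.request.urlopen` and feed the response body to `extract_log_lines`; advancing `start` by the number of lines returned reads the next page.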

Hope this helps. Do let us know if you have any further queries.
