Hello @Diogo Rodrigues ,

Welcome to the Microsoft Q&A platform.

Azure HDInsight Spark clusters provide kernels that you can use with the Jupyter Notebook on Apache Spark for testing your applications. A kernel is a program that runs and interprets your code. The three kernels are:

- PySpark - for applications written in Python 2.

- PySpark3 - for applications written in Python 3.

- Spark - for applications written in Scala.

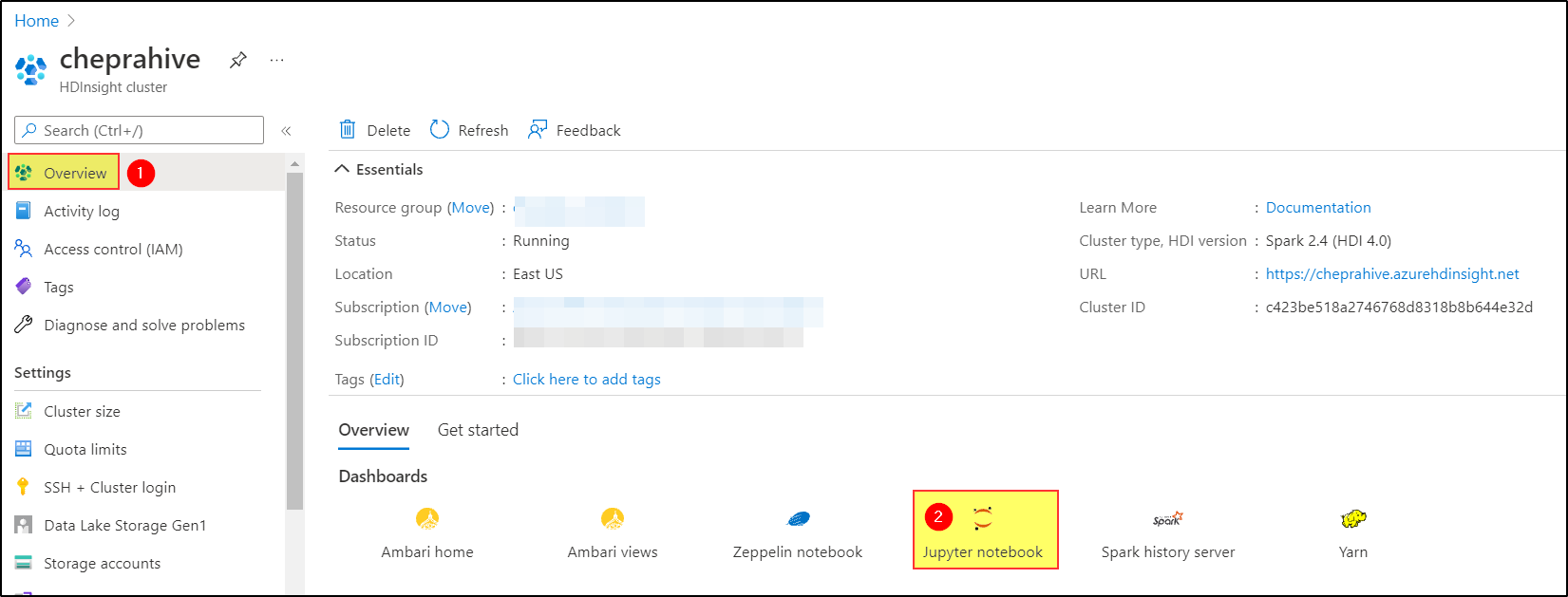

Once you have created the Azure HDInsight Spark cluster, follow these steps to open a Jupyter Notebook:

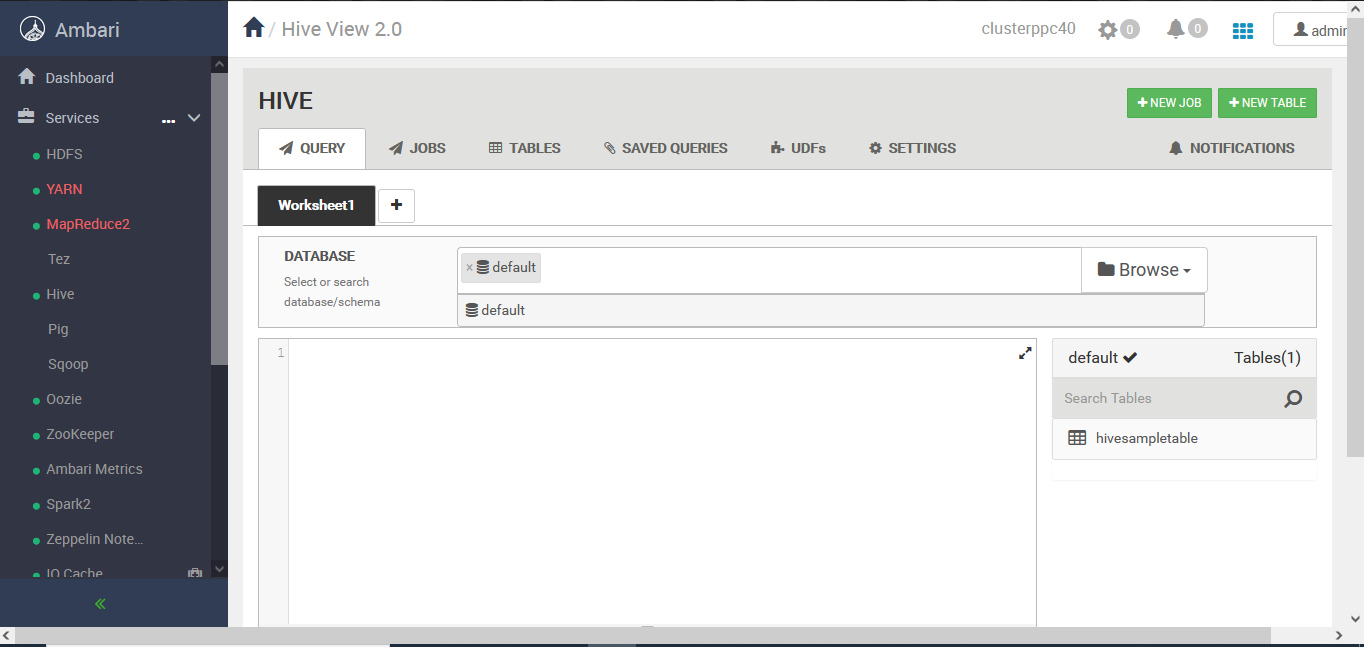

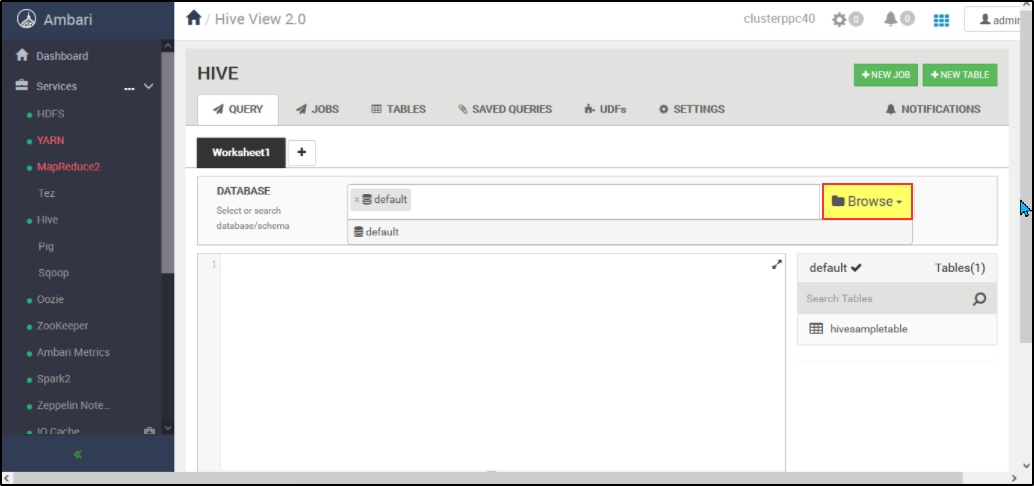

- From the Azure portal, select your Spark cluster. See List and show clusters for the instructions. The Overview view opens.

- From the Overview view, in the Cluster dashboards box, select Jupyter Notebook. If prompted, enter the admin credentials for the cluster.

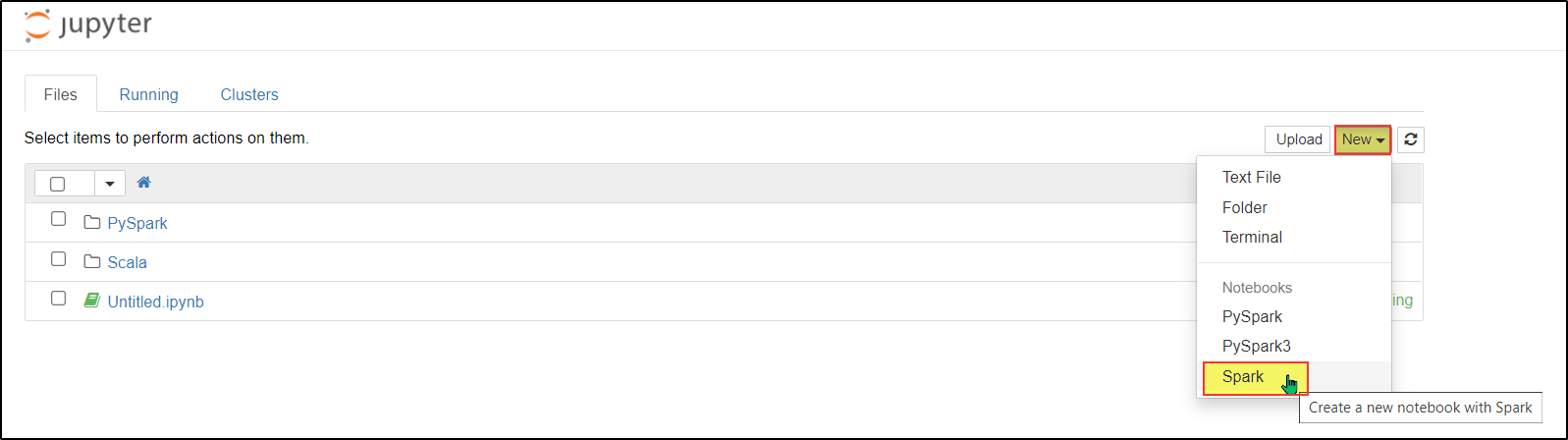

- Select New, and then select either PySpark, PySpark3, or Spark to create a notebook. Use the Spark kernel for Scala applications, the PySpark kernel for Python 2 applications, and the PySpark3 kernel for Python 3 applications.

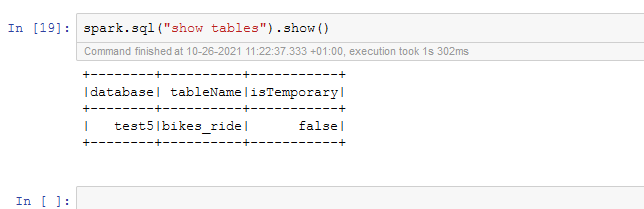

Now you can create Hive databases and tables from the Jupyter Notebook.

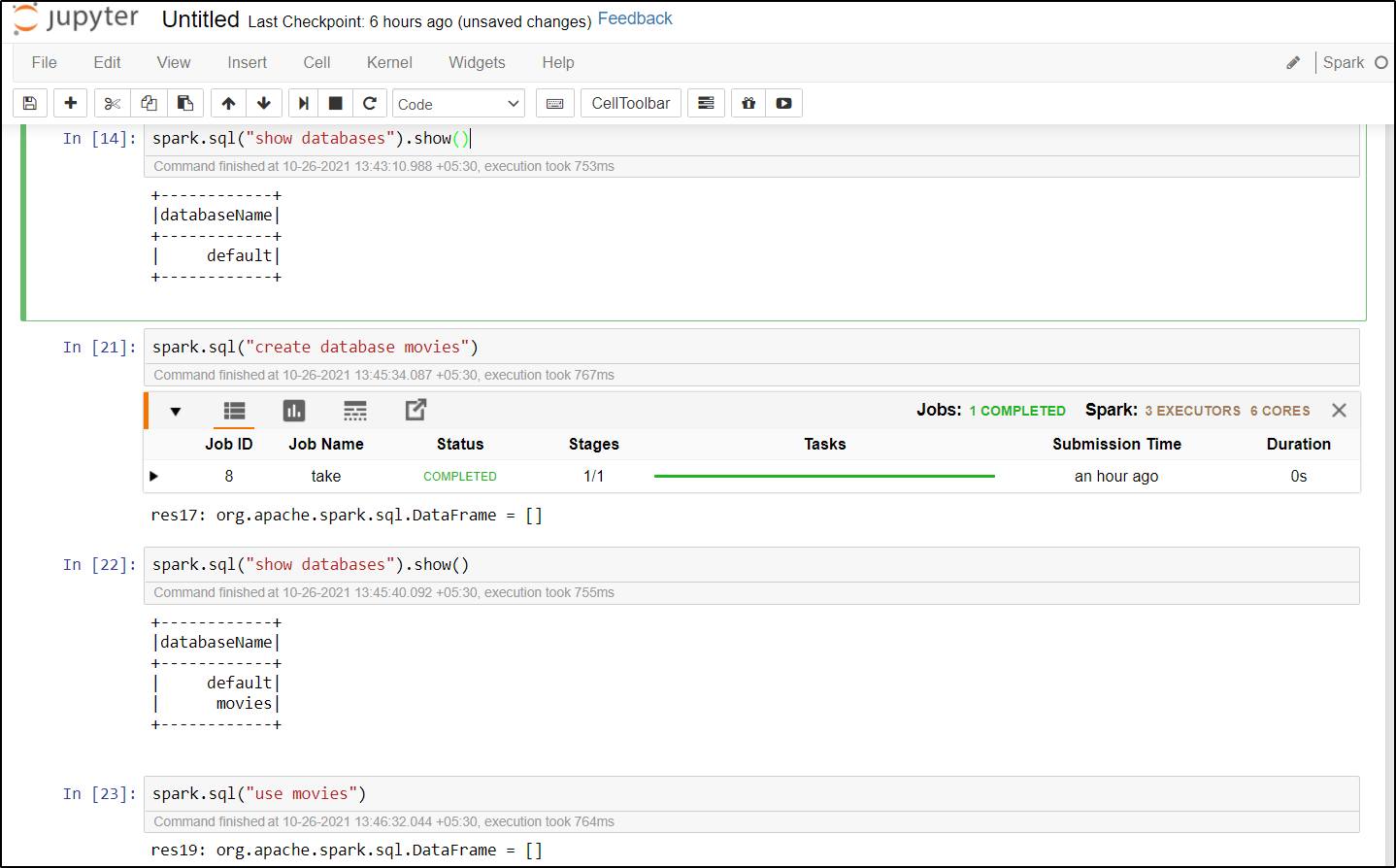

- Create a database of your choice

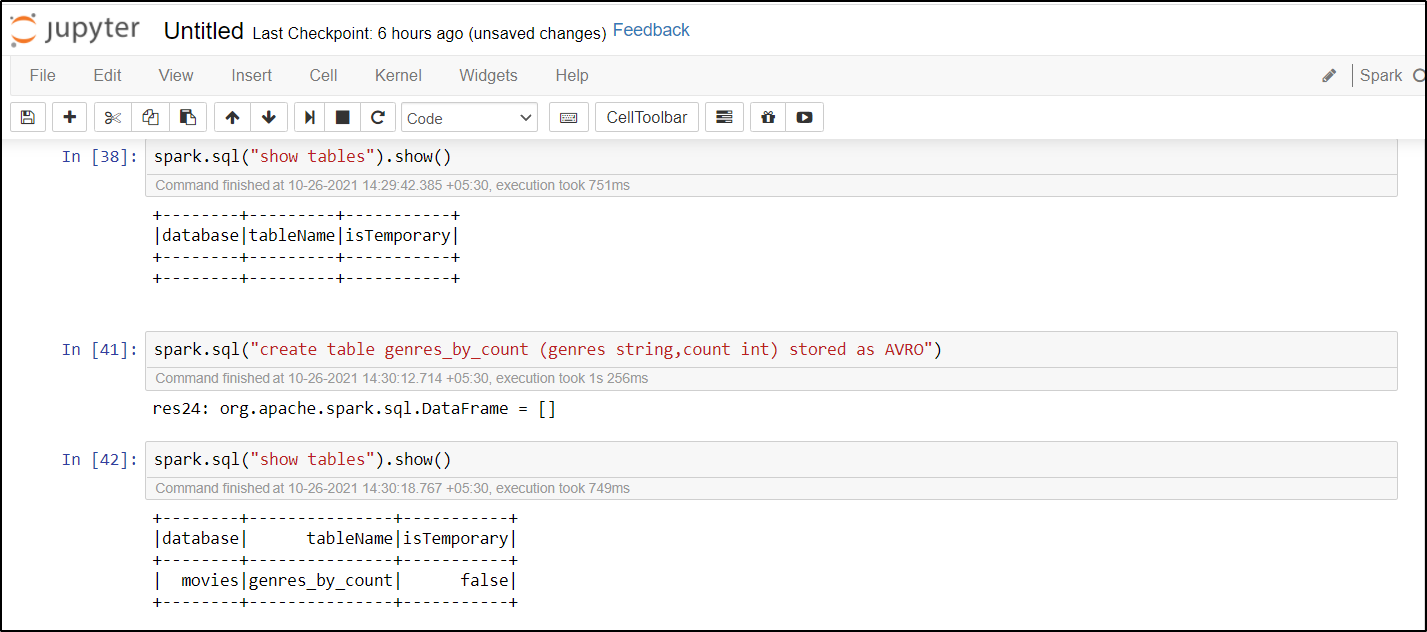

- Create a table of your choice
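The two steps above can be sketched in a notebook cell as follows. This is a minimal sketch, not the only way to do it: the database name `demo_db`, the table name `employees`, and its columns are hypothetical, and it assumes the kernel predefines a Spark session named `spark` that exposes a `sql()` method. The helper functions only build HiveQL strings, so they can be checked without a cluster.

```python
def create_database_sql(database):
    """Build a HiveQL statement that creates the database if it is missing."""
    return "CREATE DATABASE IF NOT EXISTS {}".format(database)


def create_table_sql(database, table, columns):
    """Build a HiveQL CREATE TABLE statement from (name, type) column pairs."""
    column_list = ", ".join(
        "{} {}".format(name, hive_type) for name, hive_type in columns
    )
    return "CREATE TABLE IF NOT EXISTS {}.{} ({})".format(
        database, table, column_list
    )


def run_statements(session, statements):
    """Pass each statement to session.sql(), one at a time, in order."""
    for statement in statements:
        session.sql(statement)


# Hypothetical names, chosen only for illustration.
statements = [
    create_database_sql("demo_db"),
    create_table_sql("demo_db", "employees", [("id", "INT"), ("name", "STRING")]),
]

# In a notebook cell, assuming the kernel predefines `spark`:
# run_statements(spark, statements)
# spark.sql("SHOW TABLES IN demo_db").show()
```

The string formatting uses `str.format` rather than f-strings so that the same cell should work in both the PySpark and PySpark3 kernels.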

For more details refer to Kernels for Jupyter Notebook on Apache Spark clusters in Azure HDInsight.

Also refer to the Hive manual for details on how to create tables and how to load or insert data into them.
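As a rough illustration of inserting data, the sketch below builds an `INSERT INTO TABLE ... VALUES` statement from a list of rows. The table `demo_db.employees` (with an `INT` column and a `STRING` column) and the sample rows are hypothetical, the helper handles only integers and plain strings, and running it assumes the kernel predefines a Spark session named `spark`. Check the Hive manual for the syntax your cluster version supports.

```python
def sql_literal(value):
    """Render an int or a string as a SQL literal (backslash-escaped quotes)."""
    if isinstance(value, str):
        escaped = value.replace("\\", "\\\\").replace("'", "\\'")
        return "'" + escaped + "'"
    return str(value)


def insert_rows_sql(qualified_table, rows):
    """Build one INSERT INTO TABLE ... VALUES statement covering all rows."""
    value_groups = ", ".join(
        "(" + ", ".join(sql_literal(value) for value in row) + ")"
        for row in rows
    )
    return "INSERT INTO TABLE {} VALUES {}".format(qualified_table, value_groups)


# Hypothetical table and rows, chosen only for illustration.
insert_sql = insert_rows_sql("demo_db.employees", [(1, "Ana"), (2, "Rui")])
select_sql = "SELECT id, name FROM demo_db.employees ORDER BY id"

# In a notebook cell, assuming the kernel predefines `spark`:
# spark.sql(insert_sql)
# spark.sql(select_sql).show()
```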

Hope this helps. Please let us know if you have any further queries.
