Replicate multiple objects from SAP via SAP CDC

APPLIES TO:  Azure Data Factory

Azure Data Factory  Azure Synapse Analytics

Azure Synapse Analytics

Tip

Try out Data Factory in Microsoft Fabric, an all-in-one analytics solution for enterprises. Microsoft Fabric covers everything from data movement to data science, real-time analytics, business intelligence, and reporting. Learn how to start a new trial for free!

This article describes a solution template that you can use to replicate multiple ODP objects from SAP via SAP CDC connector to Azure Data Lake Gen2 in Delta format with key partition.

This template reads an external control file in json format on your storage store, which contains your SAP ODP contexts, SAP ODP objects and key columns from SAP source system as well as your containers, folders and partitions from Azure Data Lake Gen2 destination store. It then copies each of the SAP ODP object from SAP system to Azure Data Lake Gen2 in Delta format.

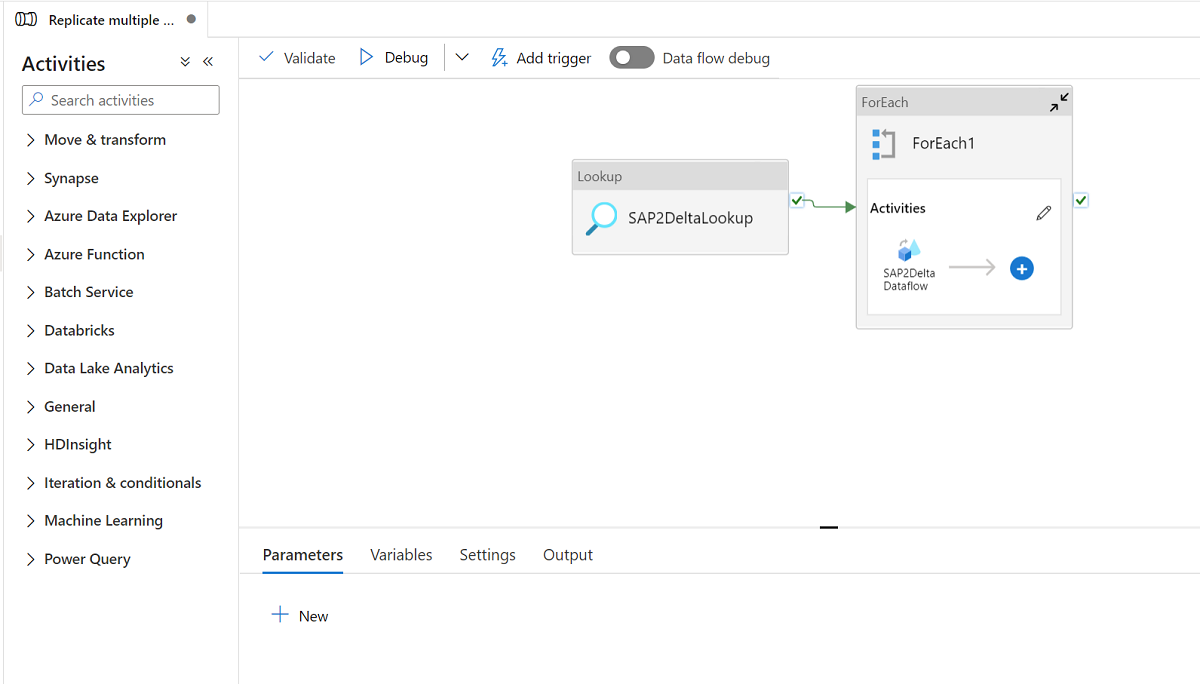

The template contains three activities:

- Lookup retrieves the SAP ODP objects list to be loaded and the destination store path from an external control file on your Azure Data Lake Gen2 store.

- ForEach gets the SAP ODP objects list from the Lookup activity and iterates each object to the mapping dataflow activity.

- Mapping dataflow replicates each SAP ODP object from SAP system to Azure Data Lake Gen2 in Delta format. It will do initial full load in the first run and then do incremental load in the subsequent runs automatically. It will merge the changes to Azure Data Lake Gen2 in Delta format.

An external control file in json format is required in this template. The schema for the control file is as below.

- checkPointKey is your custom key to manage the checkpoint of your changed data capture in ADF. You can get more details here.

- sapContext is your SAP ODP context from the source SAP system. You can get more details here.

- sapObjectName is your SAP ODP object name to be loaded from the SAP system. You can get more details here.

- sapRunMode is to determine how you want to load SAP object. It can be fullLoad, incrementalLoad or fullAndIncrementalLoad.

- sapKeyColumns are your key column names from SAP ODP objects used to do the dedupe in mapping dataflow.

- sapPartitions are list of partition conditions leading to separate extraction processes in the connected SAP system.

- deltaContainer is your container name in the Azure Data Lake Gen2 as the destination store.

- deltaFolder is your folder name in the Azure Data Lake Gen2 as the destination store.

- deltaKeyColumns are your columns used to determine if a row from the source matches a row from the sink when you want to update or delete a row.

- deltaPartition is your column used to create partitions for each unique value in such column to write data into Delta format on Azure Data Lake Gen2 via Spark cluster used by mapping dataflow. You can get more details here

A sample control file is as below:

[

{

"checkPointKey":"CheckPointFor_ZPERFCDPOS$F",

"sapContext": "ABAP_CDS",

"sapObjectName": "ZPERFCDPOS$F",

"sapRunMode": "fullAndIncrementalLoad",

"sapKeyColumns": [

"TABKEY"

],

"sapPartitions": [

[{

"fieldName": "TEXTCASE",

"sign": "I",

"option": "EQ",

"low": "1"

},

{

"fieldName": "TEXTCASE",

"sign": "I",

"option": "EQ",

"low": "X"

}]

],

"deltaContainer":"delta",

"deltaFolder":"ZPERFCDPOS",

"deltaKeyColumns":["TABKEY"],

"deltaPartition":"TEXTCASE",

"stagingStorageFolder":"stagingcontainer/stagingfolder"

},

{

"checkPointKey":"CheckPointFor_Z0131",

"sapContext": "SAPI",

"sapObjectName": "Z0131",

"sapRunMode": "incrementalLoad",

"sapKeyColumns": [

"ID"

],

"sapPartitions": [],

"deltaContainer":"delta",

"deltaFolder":"Z0131",

"deltaKeyColumns":["ID"],

"deltaPartition":"COMPANY",

"stagingStorageFolder":"stagingcontainer/stagingfolder"

}

]

Create and upload a control file into json format to your Azure Data Lake Gen2 as the destination store. The default container to store the control file is demo and default control file name is SapToDeltaParameters.json.

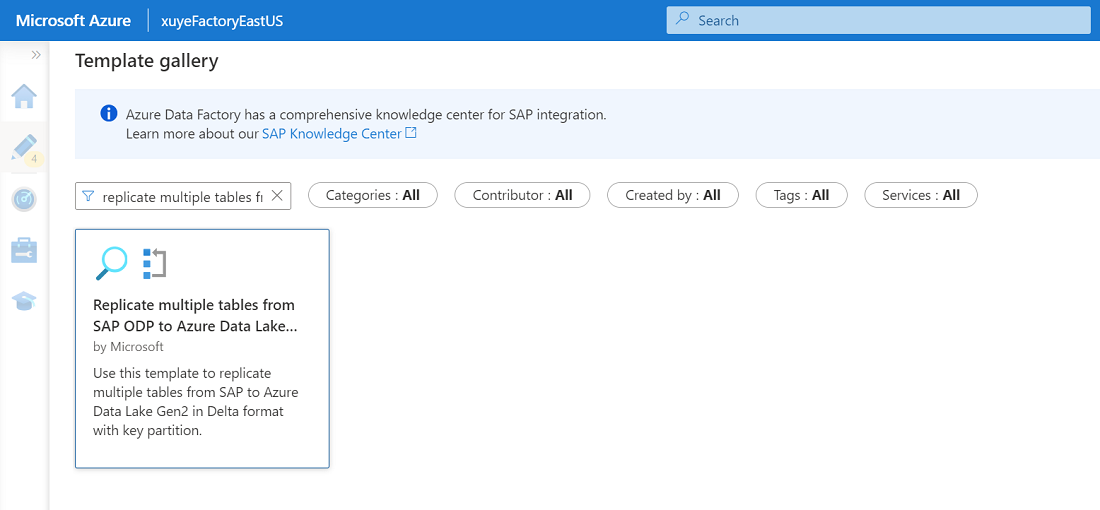

Go to the Replicate multiple tables from SAP ODP to Azure Data Lake Storage Gen2 in Delta format template and click it.

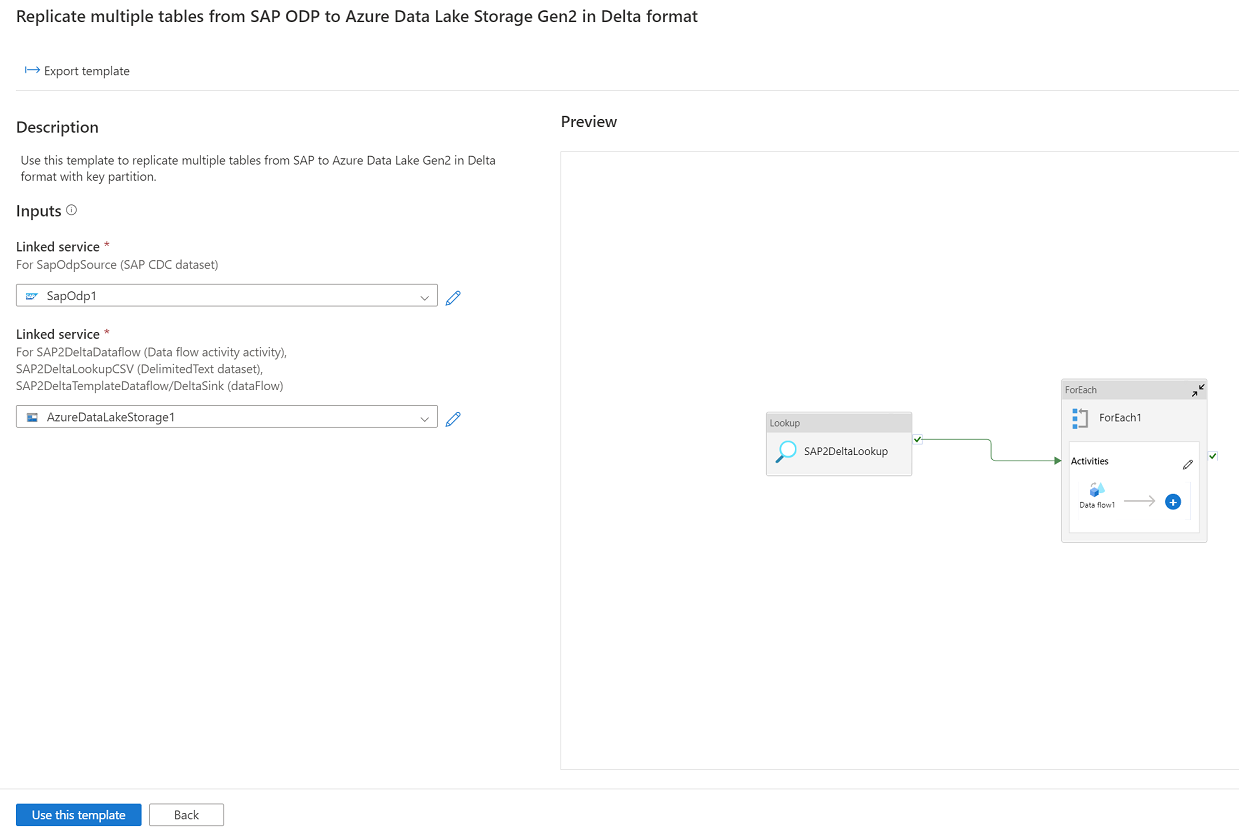

Click Continue and input your linked service connected to SAP system as source, and the linked service connected to Azure Data Lake Gen2 as the destination. You can get more details about SAP CDC linked service from here. Be aware that your external control file should be uploaded to the same account of Azure Data Lake Gen2.

Click Use this template and your will see the pipeline has been ready to use.